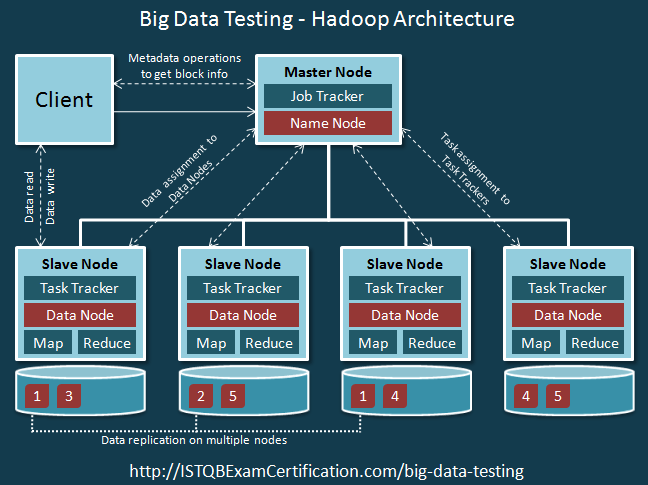

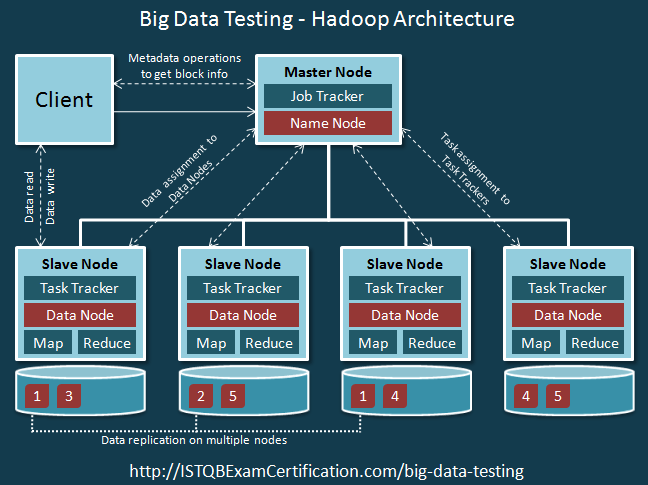

Hadoop Architecture – HBase Database. One of the potential benefits for clients, is a “not only SQL†approach, often referred to as NoSQL approach. HBase is an open-source, distributed, versioned, non-relational database modeled after Google’s Bigtable: A Distributed Storage System for Structured Data, that sits on top of Hadoop. The Hadoop Distributed File System (HDFS) is the primary data storage system used by Hadoop applications. It employs a NameNode and DataNode architecture to implement a distributed file system that provides high-performance access to data across highly scalable Hadoop clusters.

-->

APPLIES TO: SQL Server (Windows only) Azure SQL Database Azure SQL Data Warehouse Parallel Data Warehouse

The article explains how to use PolyBase on a SQL Server instance to query external data in Hadoop.

Prerequisites

- If you haven't installed PolyBase, see PolyBase installation. The installation article explains the prerequisites.

- Starting with SQL Server 2019, you must also enable the PolyBase feature.

-

PolyBase supports two Hadoop providers, Hortonworks Data Platform (HDP) and Cloudera Distributed Hadoop (CDH). Hadoop follows the 'Major.Minor.Version' pattern for its new releases, and all versions within a supported Major and Minor release are supported. The following Hadoop providers are supported:

- Hortonworks HDP 1.3, 2.1-2.6, 3.0 on Linux

- Hortonworks HDP 1.3, 2.1-2.3 on Window Server

- Cloudera CDH 4.3, 5.1 - 5.5, 5.9 - 5.13 on Linux

Note

PolyBase supports Hadoop encryption zones starting with SQL Server 2016 SP1 CU7 and SQL Server 2017 CU3. If you are using PolyBase scale-out groups, all compute nodes must also be on a build that includes support for Haddop encryption zones.

Configure Hadoop connectivity

First, configure SQL Server PolyBase to use your specific Hadoop provider.

-

Run sp_configure with 'hadoop connectivity' and set an appropriate value for your provider. To find the value for your provider, see PolyBase Connectivity Configuration. By Default, the Hadoop connectivity is set to 7.

-

You must restart SQL Server using services.msc. Restarting SQL Server restarts these services:

- SQL Server PolyBase Data Movement Service

- SQL Server PolyBase Engine

Enable pushdown computation

To improve query performance, enable pushdown computation to your Hadoop cluster:

-

Find the file yarn-site.xml in the installation path of SQL Server. Typically, the path is:

-

On the Hadoop machine, find the analogous file in the Hadoop configuration directory. In the file, find and copy the value of the configuration key yarn.application.classpath.

-

On the SQL Server machine, in the yarn-site.xml file, find the yarn.application.classpath property. Paste the value from the Hadoop machine into the value element.

-

For all CDH 5.X versions, you will need to add the mapreduce.application.classpath configuration parameters either to the end of your yarn-site.xml file or into the mapred-site.xml file. HortonWorks includes these configurations within the yarn.application.classpath configurations. See PolyBase configuration for examples.

Configure an external table

To query the data in your Hadoop data source, you must define an external table to use in Transact-SQL queries. The following steps describe how to configure the external table.

-

Create a master key on the database, if one does not already exist. This is required to encrypt the credential secret.

Arguments

PASSWORD ='password'Is the password that is used to encrypt the master key in the database. password must meet the Windows password policy requirements of the computer that is hosting the instance of SQL Server. -

Create a database scoped credential for Kerberos-secured Hadoop clusters.

-

Create an external data source with CREATE EXTERNAL DATA SOURCE.

-

Create an external file format with CREATE EXTERNAL FILE FORMAT.

-

Create an external table pointing to data stored in Hadoop with CREATE EXTERNAL TABLE. In this example, the external data contains car sensor data.

-

Create statistics on an external table.

PolyBase queries

There are three functions that PolyBase is suited for:

- Ad-hoc queries against external tables.

- Importing data.

- Exporting data.

The following queries provide example with fictional car sensor data.

Ad-hoc queries

The following ad-hoc query joins relational with Hadoop data. It selects customers who drive faster than 35 mph,joining structured customer data stored in SQL Server with car sensor data stored in Hadoop.

Importing data

The following query imports external data into SQL Server. This example imports data for fast drivers into SQL Server to do more in-depth analysis. To improve performance, it leverages Columnstore technology.

Exporting data

The following query exports data from SQL Server to Hadoop. To do this, you first have to enable PolyBase export. The create an external table for the destination before exporting data to it.

View PolyBase objects in SSMS

In SSMS, external tables are displayed in a separate folder External Tables. External data sources and external file formats are in subfolders under External Resources.

Next steps

Explore more ways to use and monitor PolyBase in the following articles:

PolyBase scale-out groups.

PolyBase troubleshooting.

PolyBase troubleshooting.

I have installed hadoop 2.7.2 in pseudo-distributed mode(machine-1).I want to add a new datanode to it to make it as a cluster.As, but the problem is both of the machine has differnet disk partitions.

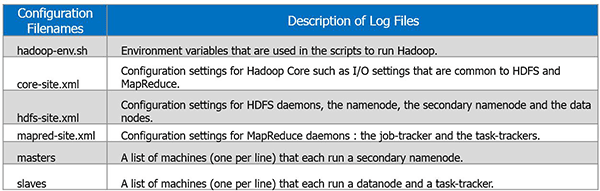

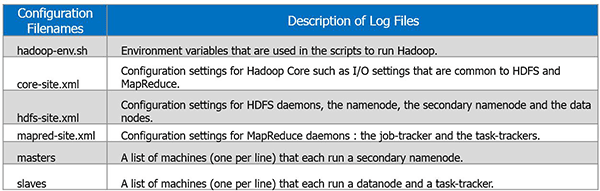

I installed same version hadoop 2.7.2 in new data node(machine-2) and also can ssh with machine-1.After googling many websites, all have common tutorials mentioning that, we have to have the same configurations files inside

/etc/hadoop/ folder.

With the above said, my existing configurations in machine-1 are:

core-site.xml

hdfs-site.xml:

/home1 is a disk mounted in machine1.

Machine-2 has two disk mounted namely /hdd1 and /hdd2.

Now, what should i specify in

hdfs-site.xml on the new machine(machine-2) to make use of both hdd1 and hdd2?

should the value of

dfs.data.dir of all nodes needs to be same?

Is the

dfs.namenode.name.dir property required on hdfs-site.xml on machine2(since it is not a name node)?

My simplified question is it mandatory to replicate the master node configuration files in slave nodes also? Please help me out on this..

BruceWayne

2,3812 gold badges16 silver badges30 bronze badges

M.PrabhuM.Prabhu

2 Answers

To add datanode check below

-

Copy

core-site.xml, hdfs-site.xml, mapred-site.xml, yarn-site.xml and hadoop-env.shfiles to new machine hadoop dir -

Add ip address or hostname in /etc/hosts

-

Add ip address of the new datanode in

slaves file(located in/etc/hadoop/) -

As you mentioned you have 2 hdd, mention those locations in

hdfs-site.xmlfile like below<name>dfs.datanode.data.dir<name><property>/hdd1,/hdd2<property> - Restart the services

2,3812 gold badges16 silver badges30 bronze badges

You just need to copy entire hadoop folder from node1 to node2 . So in both configuration should point hdfs://CP000187:9000 . You dont have to do any addition settings in node2 .

To start datanode in node2 run (From sbin) .You need run only datanode and nodemanager process in node2

To check whether datanode is added correct or not , run dfsadmin -report in node1

Output :

sterin jacobsterin jacob

RSS Feed

RSS Feed